Virtual Emotion Mirror

AI-Driven Facial Emotion Recognition & Personalized Content System

Motivation

Explore how facial emotion recognition enables emotion-aware personalization and how emotional data can provide self-awareness insights over time.

Solution

Hybrid architecture: a React frontend performs face detection, a Python backend classifies emotions (Happy, Sad, Angry, Surprised, Neutral), and NestJS orchestrates the two. The system maps detected emotions to content recommendations and tracks patterns over time for analytics.

- Hybrid Architecture – Client-side face detection reduces latency; server-side emotion classification ensures accuracy. NestJS orchestrates, Python handles inference.

- Real-Time Emotion Pipeline – Face detection → feature extraction → emotion classification (5 emotions) → temporal smoothing for stable predictions.

- Personalized Recommendations – Maps emotions to content: calm/uplifting music for stress/sadness, high-energy for happiness. Movies filtered by emotional compatibility.

- Emotional Analytics – Tracks patterns over days/weeks/months, identifies mood cycles and stress trends for self-awareness insights.

- Scalable Design – Modular services enable independent scaling of frontend, inference, and data storage.

- Real-Time Detection – Live facial expression analysis with minimal latency

- Hybrid Architecture – Client-side preprocessing, server-side inference for performance and scalability

- Personalized Recommendations – Music and movies adapt to emotional state in real time

- Emotional Analytics – Tracks patterns, mood cycles, and stress trends for self-awareness

- Temporal Smoothing – Stable predictions by averaging across time windows

Design Process

The architecture is optimized for real-time performance and scalability. Key decisions: client-side face detection to reduce backend load, batched inference requests, a hybrid client/server split that balances speed and accuracy, and modular services that scale independently.

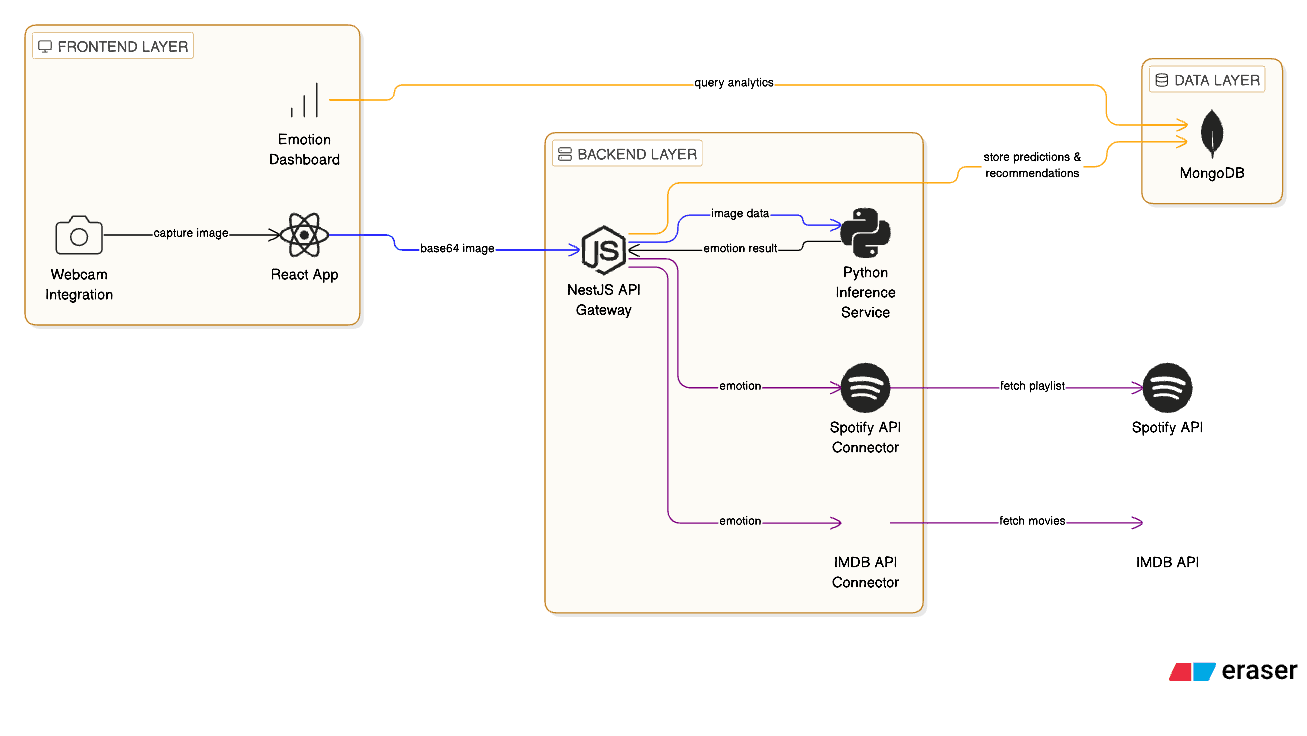

System Architecture

Three-layer architecture: a React frontend for client-side face detection; a backend that pairs a NestJS orchestration layer with a Python emotion-classification service; and MongoDB for data persistence. Offloading face detection to the client reduces backend load and latency.

Hybrid architecture balances real-time performance and scalability. Modular design enables independent optimization and scaling of each component.

- Frontend: React.js with webcam integration, lightweight browser-based face detection

- Backend: NestJS API gateway, Python deep learning service for emotion classification

- Data: MongoDB for timestamped predictions, patterns, and trends

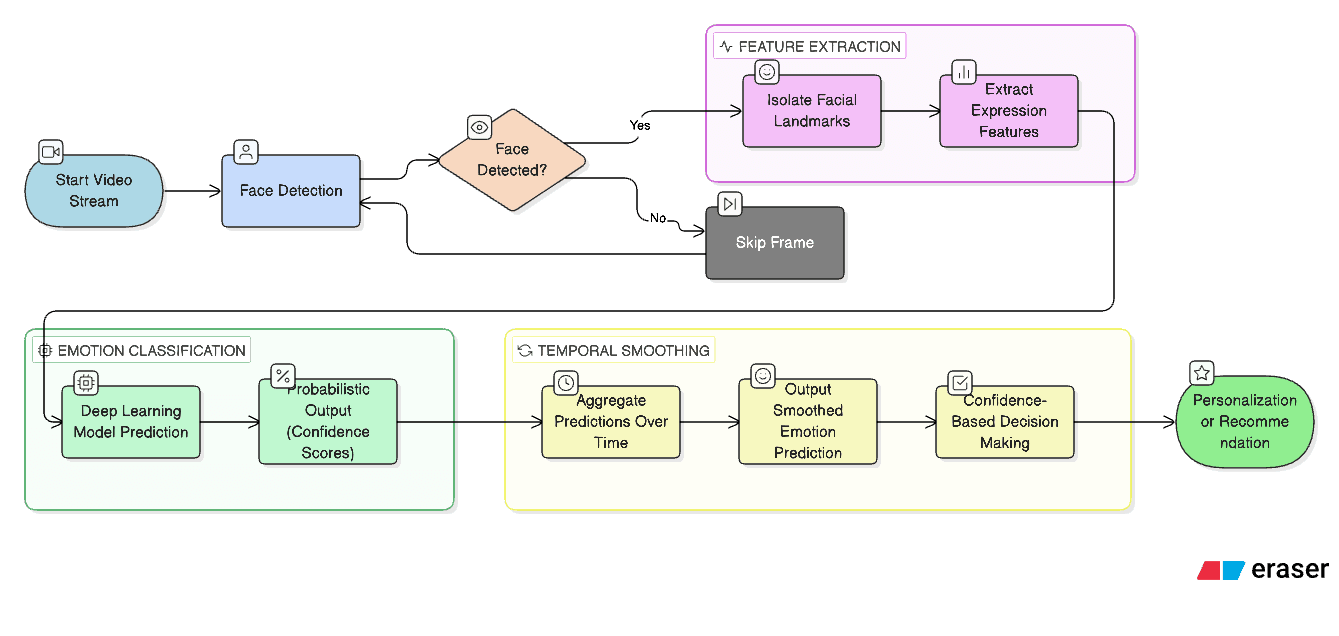

Emotion Recognition Pipeline

Four-stage pipeline: face detection → feature extraction → emotion classification (Happy, Sad, Angry, Surprised, Neutral) → temporal smoothing. Processes live video frames with probabilistic outputs and confidence scores. Temporal smoothing prevents abrupt changes from noisy frames.

Pipeline enables real-time emotion detection with stable predictions. Probabilistic outputs support confidence-based personalization.

- Face detection: runs in the browser on frames captured through the webcam API

- Feature extraction: facial landmarks and expression features

- Emotion classification: TensorFlow models with confidence scores

- Temporal smoothing: averages predictions across time windows
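The temporal smoothing stage can be illustrated with a minimal sketch. It assumes the classifier returns one probability per emotion for each frame and that a simple moving average over the last few frames is used. The class name `TemporalSmoother` and the window size are hypothetical, not taken from the project code.

```python
from collections import deque

EMOTIONS = ("happy", "sad", "angry", "surprised", "neutral")


class TemporalSmoother:
    """Averages per-frame probability vectors over a sliding window."""

    def __init__(self, window_size: int = 5) -> None:
        if window_size < 1:
            raise ValueError("window_size must be at least 1")
        # A deque with maxlen drops the oldest frame automatically.
        self._frames = deque(maxlen=window_size)

    def update(self, probs: dict) -> tuple:
        """Add one frame's probabilities; return (label, smoothed confidence)."""
        self._frames.append(probs)
        n = len(self._frames)
        # Missing emotions in a frame are treated as probability 0.
        averaged = {
            e: sum(frame.get(e, 0.0) for frame in self._frames) / n
            for e in EMOTIONS
        }
        label = max(averaged, key=averaged.get)
        return label, averaged[label]


# Two confident "happy" frames followed by one noisy "sad" frame.
smoother = TemporalSmoother(window_size=3)
smoother.update({"happy": 0.9, "neutral": 0.1})
smoother.update({"happy": 0.8, "neutral": 0.2})
label, confidence = smoother.update({"sad": 0.7, "happy": 0.3})
print(label, round(confidence, 2))
```

In this example the single noisy frame does not flip the prediction: the averaged label stays "happy", with a lower confidence than the first two frames had on their own. A larger window gives steadier output at the cost of reacting more slowly to real changes.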

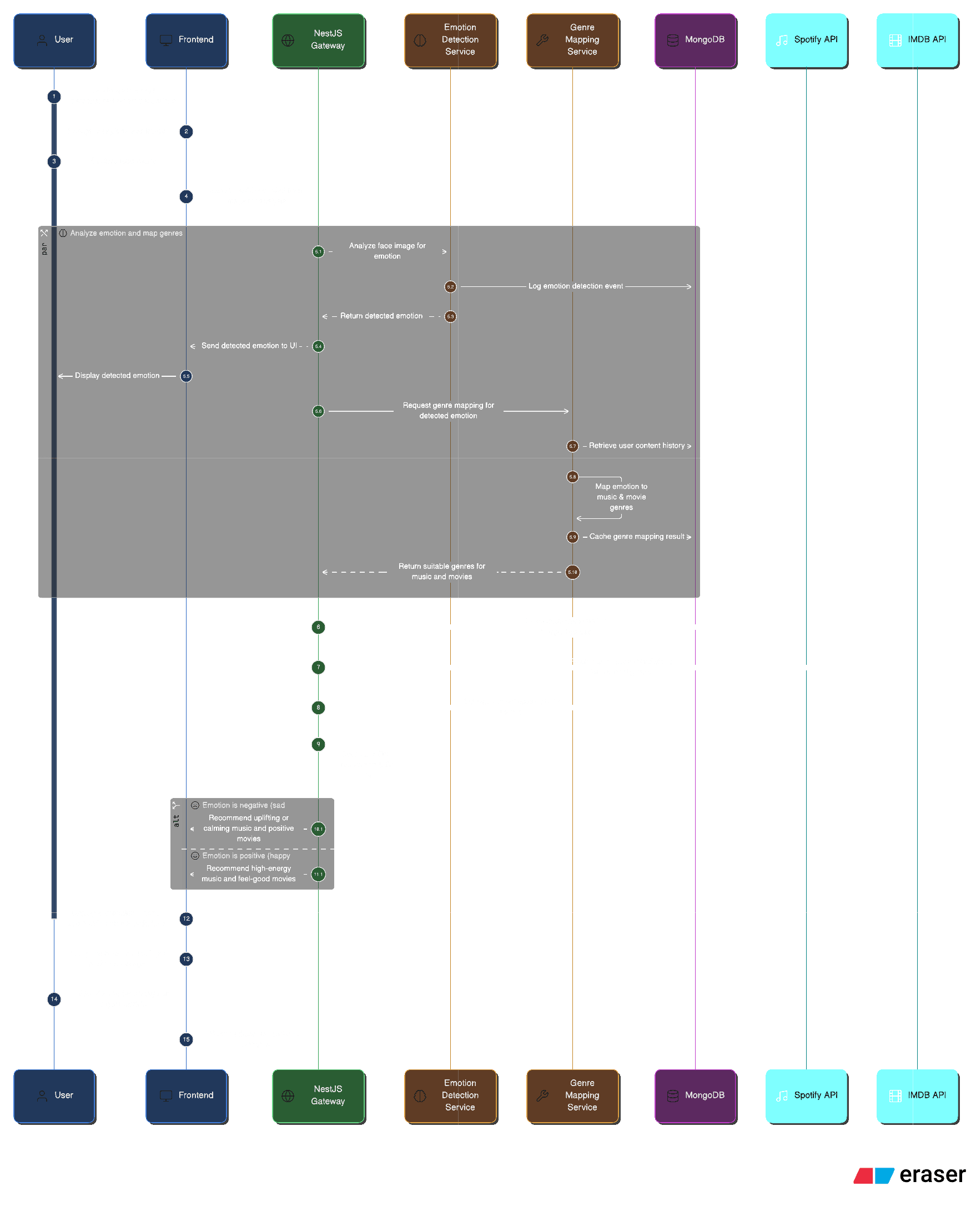

Recommendation Engine

Maps detected emotions to content suggestions. Music: calm/uplifting for stress/sadness, high-energy for happiness. Movies: genre filtering by emotional compatibility. A feedback loop updates recommendations in real time as the emotional state changes.

Engine creates adaptive recommendations that respond to current emotional state, not just historical behavior. Feedback loop keeps content relevant to user's mood.

- Emotion-to-content mapping for music and movies

- Real-time updates as emotional state changes

- Integration with Spotify and IMDb APIs

- Caching and optimization for fast retrieval
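One way to picture the emotion-to-content mapping is a lookup table with a confidence gate, sketched below. Only "calm/uplifting for sadness" and "high-energy for happiness" come from the description above. The other table entries, the `ContentProfile` type, the `recommend` function, and the 0.6 threshold are illustrative assumptions, and no Spotify or IMDb calls are shown.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContentProfile:
    music_moods: tuple
    movie_genres: tuple


# Illustrative mapping. Apart from the "happy" and "sad" music moods,
# every entry here is an assumption made for the sake of the example.
EMOTION_TO_CONTENT = {
    "happy": ContentProfile(("high-energy",), ("comedy", "adventure")),
    "sad": ContentProfile(("calm", "uplifting"), ("feel-good", "animation")),
    "angry": ContentProfile(("calm",), ("documentary",)),
    "surprised": ContentProfile(("upbeat",), ("mystery",)),
    "neutral": ContentProfile(("popular",), ("popular",)),
}


def recommend(emotion: str, confidence: float,
              min_confidence: float = 0.6) -> ContentProfile:
    """Return a content profile, falling back to neutral on weak predictions."""
    if confidence < min_confidence or emotion not in EMOTION_TO_CONTENT:
        return EMOTION_TO_CONTENT["neutral"]
    return EMOTION_TO_CONTENT[emotion]


print(recommend("sad", 0.85).music_moods)
print(recommend("sad", 0.40).music_moods)
```

The confidence gate is one possible form of confidence-based personalization: a low-confidence "sad" prediction falls back to the neutral profile instead of changing the user's content on weak evidence.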

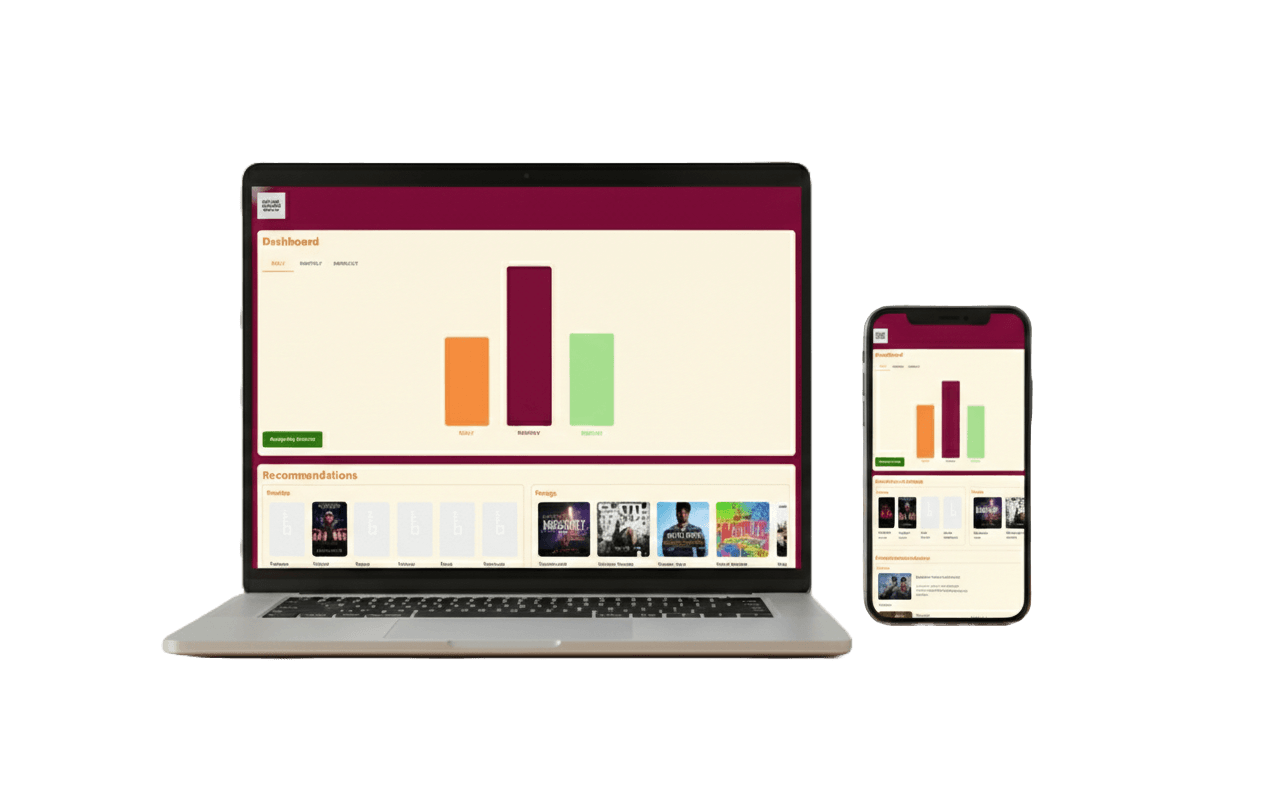

Emotional Analytics

Transforms raw emotion data into insights. Tracks distribution over days/weeks/months, identifies recurring patterns, mood cycles, and stress trends. Dashboard visualizes trends for self-awareness and well-being reflection.

Analytics layer provides long-term value beyond real-time recommendations, enabling users to understand emotional patterns and reflect on well-being.

- Tracks emotional distribution over time

- Identifies recurring patterns and mood cycles

- Visualization dashboard for trends and insights

- Privacy-preserving aggregation
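Tracking the emotional distribution over time can be sketched as a per-day aggregation of timestamped predictions. The record shape, a `(timestamp, label)` pair, and the function name `daily_distribution` are assumptions. The actual MongoDB schema and queries are not described here.

```python
from collections import Counter, defaultdict
from datetime import date, datetime


def daily_distribution(records):
    """Turn (timestamp, label) pairs into {day: {label: share of that day}}."""
    counts = defaultdict(Counter)
    for timestamp, label in records:
        counts[timestamp.date()][label] += 1
    return {
        day: {label: n / sum(counter.values()) for label, n in counter.items()}
        for day, counter in counts.items()
    }


# Hypothetical sample data: four predictions on one day, one on the next.
records = [
    (datetime(2024, 1, 1, 9, 0), "happy"),
    (datetime(2024, 1, 1, 12, 30), "happy"),
    (datetime(2024, 1, 1, 15, 0), "happy"),
    (datetime(2024, 1, 1, 21, 0), "sad"),
    (datetime(2024, 1, 2, 8, 0), "neutral"),
]
distribution = daily_distribution(records)
print(distribution[date(2024, 1, 1)])
```

The same grouping extends to weeks or months by changing the key derived from the timestamp. The output contains only per-period shares, not individual frames.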

Implementation

React frontend, NestJS backend, Python inference service, and MongoDB data layer. Key solutions: client-side preprocessing, batched inference requests, temporal smoothing, and a modular architecture. Together these keep latency low enough for real-time use and let each service scale independently.

Production-ready system with real-time emotion detection, accurate predictions, and scalable architecture. Modular design enables independent optimization and scaling.

- Frontend: React.js with webcam integration, browser-based face detection

- Backend: NestJS API with authentication and orchestration

- Inference: Python TensorFlow models for emotion classification

- Data: MongoDB for predictions, patterns, and trends
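Batched inference requests can be sketched as grouping detected face crops into fixed-size batches before each call to the inference service. The helper name `batch_frames` and the batch size are hypothetical, and the strings below stand in for image data.

```python
from itertools import islice


def batch_frames(frames, batch_size):
    """Yield consecutive lists of at most batch_size frames."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(frames)
    # islice pulls up to batch_size items; an empty list ends the loop.
    while batch := list(islice(iterator, batch_size)):
        yield batch


# Five placeholder face crops sent in batches of two.
frames = ["crop_0", "crop_1", "crop_2", "crop_3", "crop_4"]
batches = list(batch_frames(frames, batch_size=2))
print(batches)
```

Sending one request per batch rather than per frame reduces request overhead, at the cost of a short wait while a batch fills. The final batch may be smaller than the others.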

Reflection

Outcomes

Successfully built a production-ready hybrid AI architecture integrating deep learning into real-time web apps. Demonstrated real-time emotion detection with personalized recommendations and long-term insights. Explored ethical and technical considerations of emotion-based systems, validating feasibility of emotion-aware applications.

If I had more time

Future work: multi-modal detection (voice and facial), on-device inference for privacy, emotion-aware UI themes, and more advanced analytics dashboards with richer pattern recognition.